ClickHouse's Object Storage Search Overhaul

Alps Wang

Mar 25, 2026

Object Storage's Latency Challenge

The ClickHouse blog post is a deep dive into the redesign of its full-text index for object storage. The core change is a shift from random access, where every read pays the high per-request latency of object storage, to sequential access. The dictionary is laid out in blocks compressed with front coding, and a sparse index that stays in memory points into those blocks. Posting lists adapt to token cardinality: Roaring bitmaps, VarInt encoding, or lists embedded alongside the dictionary entry, which balances storage efficiency against query performance. Minimizing random I/O is the key takeaway for anyone building scalable search on cloud object storage. The article states the problem clearly and presents a well-reasoned, technically sound solution.
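To make the dictionary layout described above more concrete, here is a minimal Python sketch of a front-coded, block-based dictionary with an in-memory sparse index. It is an illustration under stated assumptions, not ClickHouse's implementation: the block size, the function names, and the choice to index each block by its first token are all hypothetical.

```python
import bisect
from os.path import commonprefix

BLOCK_SIZE = 4  # hypothetical; chosen small so the example is easy to follow


def front_code_block(tokens):
    """Encode a sorted block as (shared_prefix_length, suffix) pairs."""
    encoded, prev = [], ""
    for tok in tokens:
        shared = len(commonprefix([prev, tok]))
        encoded.append((shared, tok[shared:]))
        prev = tok
    return encoded


def decode_block(encoded):
    """Rebuild the tokens of one block by scanning it front to back."""
    tokens, prev = [], ""
    for shared, suffix in encoded:
        prev = prev[:shared] + suffix
        tokens.append(prev)
    return tokens


def build_dictionary(sorted_tokens, block_size=BLOCK_SIZE):
    """Split sorted tokens into front-coded blocks plus a sparse index.

    The sparse index holds only the first token of each block and is the
    part assumed to stay in memory.
    """
    sparse_index, blocks = [], []
    for i in range(0, len(sorted_tokens), block_size):
        chunk = sorted_tokens[i:i + block_size]
        sparse_index.append(chunk[0])
        blocks.append(front_code_block(chunk))
    return sparse_index, blocks


def lookup(token, sparse_index, blocks):
    """Return (block_number, position_in_block) or None if absent.

    The binary search touches only the in-memory sparse index; after that a
    single block is read and decoded sequentially.
    """
    pos = bisect.bisect_right(sparse_index, token) - 1
    if pos < 0:
        return None
    tokens = decode_block(blocks[pos])
    if token in tokens:
        return pos, tokens.index(token)
    return None


tokens = sorted(["click", "clickhouse", "clicks", "cloud",
                 "index", "indexes", "object", "storage"])
sparse_index, blocks = build_dictionary(tokens)
```

In this sketch a lookup costs one in-memory binary search plus one contiguous block read, which is the access pattern that suits object storage. Front coding stores `clickhouse` after `click` as the pair `(5, "house")`, so shared prefixes are not repeated within a block.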

However, the trade-offs of the new design deserve deeper exploration. The article says the new layout can also be used on local disks without regressing performance, but it does not quantify that comparison. Embedding posting lists for very low-cardinality tokens is elegant, yet the memory footprint and the effect on cache locality of the dictionary itself, especially for very large dictionaries, are left open. The article hints at this with the 'accidental but near-perfect empirical fit' for the threshold of six, but more data on how that threshold holds up across highly diverse datasets and query patterns would be illuminating. Finally, concrete figures for query performance (e.g., percentage speedups, latency reductions) would make the impact of the redesign easier to judge.

Key Points

- ClickHouse has redesigned its full-text index to optimize for object storage, addressing latency bottlenecks inherent in remote storage.

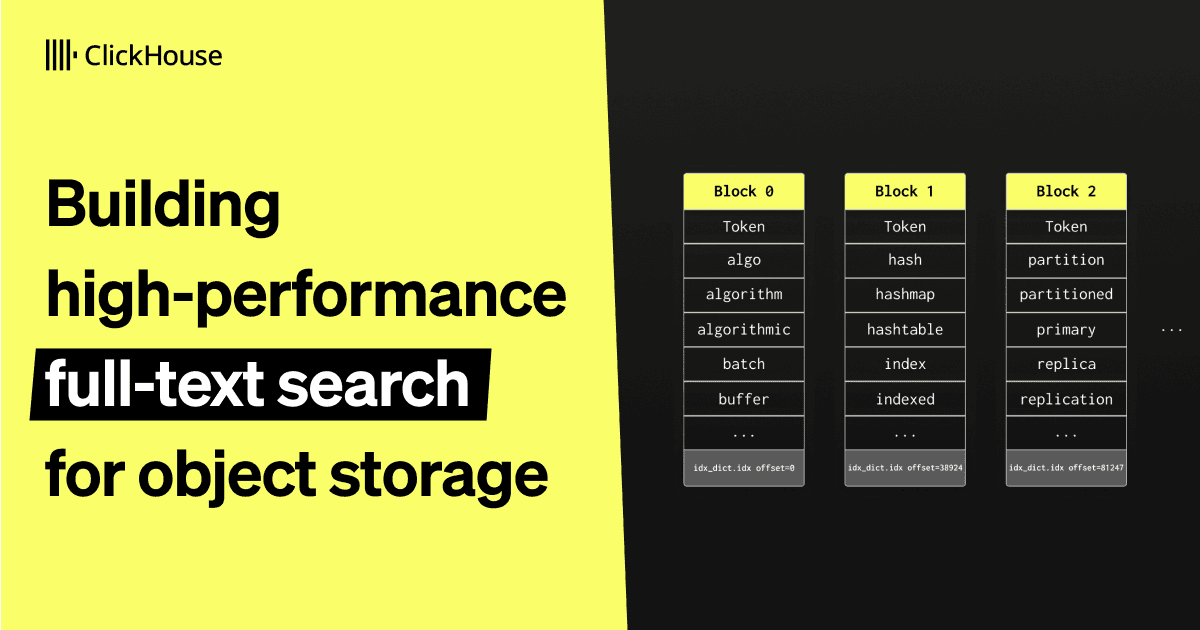

- The new design prioritizes sequential access patterns over random reads, utilizing a block-based dictionary layout with front-coding compression and an in-memory sparse index.

- Posting lists use different representations (Roaring bitmaps, VarInt, embedded) depending on token cardinality to balance storage efficiency and query performance, with a focus on minimizing I/O for low-cardinality tokens.

- This redesign aims to maintain high performance for full-text search on object storage without compromising performance on local disks.
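The cardinality-based choice of posting list representation in the points above can be sketched as follows. This is a hedged illustration only: the embedded threshold of six comes from the article, but treating it as inclusive, the `BITMAP_MIN` cutoff, the ordering of VarInt before Roaring bitmaps, and all function names are assumptions made for this example. The Roaring bitmap branch is named but not implemented, since it would need a third-party library.

```python
EMBED_MAX = 6      # threshold mentioned in the article; inclusive is an assumption
BITMAP_MIN = 1024  # hypothetical cutoff, not taken from the article


def varint_encode(n):
    """Encode a non-negative integer as a little-endian base-128 VarInt."""
    out = bytearray()
    while n >= 0x80:
        out.append((n & 0x7F) | 0x80)  # low 7 bits, continuation bit set
        n >>= 7
    out.append(n)
    return bytes(out)


def encode_postings(row_ids):
    """Delta-encode sorted row ids, then VarInt-encode each gap."""
    out, prev = bytearray(), 0
    for row in row_ids:
        out += varint_encode(row - prev)
        prev = row
    return bytes(out)


def decode_postings(data):
    """Inverse of encode_postings: one sequential pass over the bytes."""
    rows, current, shift, prev = [], 0, 0, 0
    for byte in data:
        current |= (byte & 0x7F) << shift
        if byte & 0x80:
            shift += 7
        else:
            prev += current
            rows.append(prev)
            current, shift = 0, 0
    return rows


def choose_representation(cardinality):
    """Pick a posting list format from the number of matching rows."""
    if cardinality <= EMBED_MAX:
        return "embedded"  # stored next to the dictionary entry, no extra read
    if cardinality < BITMAP_MIN:
        return "varint"
    return "roaring"


encoded = encode_postings([3, 10, 300])
```

Here the three row ids compress to four bytes because only the gaps (3, 7, 290) are stored, and decoding needs a single forward scan rather than random access.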

📖 Source: Building high-performance full-text search for object storage
